Transactions and Data Integrity

1.1. What is a Transaction?

A transaction is a single, logical unit of work that either fully completes or completely fails. The primary goal of a transaction is to ensure data integrity. To be reliable, a transaction must adhere to the ACID properties introduced earlier (Atomicity, Consistency, Isolation, and Durability). In practice, this means that the changes of a transaction are persisted in their entirety only after all business rules have been validated; otherwise, none of them are persisted.

In Mendix, a transaction is managed by the Runtime and encompasses the entire execution of a top-level microflow and its sub-microflows, collectively referred to as a microflow hierarchy or call stack. All operations across the hierarchy are therefore committed as a single unit.

1.2. The Critical Role of Transactions in Enterprise Apps

Understanding transactions is not optional; it is the boundary between a simple prototype and a robust enterprise system. As soon as an application matures beyond basic data entry, transactional logic becomes the primary defender of your system's integrity for three "in-your-face" reasons:

1.2.1. Enforcing "All-or-Nothing" Integrity

In enterprise logic, a single business action (e.g., "Fulfill Sales Order") often requires simultaneous atomic updates across multiple entities to remain valid. This involves simultaneously allocating inventory, updating the customer's credit limit, and changing the order status to "Dispatched." Data integrity in this context is not merely about technical error handling, but about the enforcement of all business rules: ensuring the system never persists a state that violates business logic.

If a business rule fails halfway through an operation (for instance, the customer's credit check fails after the inventory has been allocated), the transaction ensures that all preceding steps are discarded. Without this "all-or-nothing" enforcement, the database becomes a collection of inconsistent state fragments: partial records that no longer reflect an accurate business reality. This level of data integrity ensures that the system transitions exclusively between logically consistent states, preventing the emergence of orphaned data or contradictory entries that undermine the reliability of the enterprise system.
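The credit-check scenario above can be illustrated outside Mendix with a plain SQL transaction. The following is a minimal sketch using Python's built-in `sqlite3` module; the table names, the `CreditCheckFailed` exception, and the helper functions are assumptions made for this illustration and do not represent how the Mendix Runtime is implemented.

```python
import sqlite3


class CreditCheckFailed(Exception):
    """Hypothetical business-rule failure used for this illustration."""


def make_db():
    """Create an in-memory database with one inventory item, customer and order."""
    conn = sqlite3.connect(":memory:")
    conn.executescript(
        """
        CREATE TABLE inventory (id INTEGER PRIMARY KEY, stock INTEGER);
        CREATE TABLE customer (id INTEGER PRIMARY KEY, credit INTEGER);
        CREATE TABLE orders (id INTEGER PRIMARY KEY, status TEXT);
        INSERT INTO inventory VALUES (1, 10);
        INSERT INTO customer VALUES (1, 50);
        INSERT INTO orders VALUES (1, 'Open');
        """
    )
    return conn


def fulfill_order(conn, quantity, order_total):
    """Allocate stock, charge credit and dispatch order 1 as one unit of work."""
    try:
        with conn:  # commits on success, rolls back if an exception escapes
            conn.execute(
                "UPDATE inventory SET stock = stock - ? WHERE id = 1", (quantity,)
            )
            credit = conn.execute(
                "SELECT credit FROM customer WHERE id = 1"
            ).fetchone()[0]
            if credit < order_total:
                # Inventory was already allocated above; raising discards it.
                raise CreditCheckFailed("credit limit exceeded")
            conn.execute(
                "UPDATE customer SET credit = credit - ? WHERE id = 1", (order_total,)
            )
            conn.execute("UPDATE orders SET status = 'Dispatched' WHERE id = 1")
        return True
    except CreditCheckFailed:
        return False


def current_state(conn):
    """Return (stock, credit, order status) as currently stored."""
    stock = conn.execute("SELECT stock FROM inventory WHERE id = 1").fetchone()[0]
    credit = conn.execute("SELECT credit FROM customer WHERE id = 1").fetchone()[0]
    status = conn.execute("SELECT status FROM orders WHERE id = 1").fetchone()[0]
    return stock, credit, status
```

When the credit check fails, the inventory allocation that preceded it is discarded together with everything else, so the stored state never shows allocated stock for an order that was not dispatched.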

1.2.2. Concurrency Control and Data Conflicts

By default, Mendix employs a "Last User Wins" strategy, which can result in inadvertent data loss during simultaneous edits. When the Optimistic Locking capability is enabled (considered a core part of the platform from Mendix 11 MTS onwards), the platform prevents a commit if the underlying record has changed since it was retrieved. Implementing this requires deliberate transaction management to handle ConcurrentModification exceptions; without it, users may experience unhandled system errors or the frustration of lost work.
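The general idea behind optimistic locking can be sketched with a version number that is compared at commit time. This is a generic illustration of the technique, not the Mendix implementation; the `VersionedStore` class and the `ConcurrentModificationError` exception are hypothetical names chosen for this example.

```python
class ConcurrentModificationError(Exception):
    """Hypothetical stand-in for the conflict raised on a stale commit."""


class VersionedStore:
    """Toy store that rejects commits based on an outdated version."""

    def __init__(self):
        self._rows = {}  # object id -> (version, data)

    def insert(self, obj_id, data):
        self._rows[obj_id] = (1, dict(data))

    def retrieve(self, obj_id):
        """Return the version that was read together with a private copy."""
        version, data = self._rows[obj_id]
        return version, dict(data)

    def commit(self, obj_id, read_version, data):
        """Store the data only if nobody committed since it was retrieved."""
        current_version, _ = self._rows[obj_id]
        if current_version != read_version:
            raise ConcurrentModificationError(
                f"{obj_id} changed since version {read_version} was retrieved"
            )
        self._rows[obj_id] = (current_version + 1, dict(data))
```

If two users retrieve the same object and both try to commit, the second commit raises the conflict instead of silently overwriting the first. The calling logic then has to decide what to do with the rejected change, which is where the transaction handling mentioned above comes in.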

1.2.3. Preventing "Ghost Success" in Integrations

When Mendix orchestrates external APIs, developers must manage data integrity across two distinct scenarios based on the "Point of No Return" within the transaction:

Scenario 1: API Execution Prior to Local Commit

If an API is called before the local transaction is committed, the database acts as an internal safety net. If the external service fails or returns an error, the Mendix transaction allows you to revert all local changes instantly. This ensures that your internal data remains consistent with the fact that the external integration was unsuccessful.

Scenario 2: API Execution After Local Commit

If the API is called after a successful local commit, the transaction has already closed, and the stored data can no longer be rolled back if the API call subsequently fails. This creates a high-risk state where the internal database reflects a "Success" while the external system remains unchanged. To resolve this "Data Divergence," developers must implement robust object state management, such as a "Pending" status, and Compensating Transactions (e.g., an automated 'Undo' or 'Delete') to programmatically re-align the two systems.

Without these controls, the business faces a significant reconciliation burden, as the internal system of record will no longer match the state of the external ecosystem.
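Scenario 2 can be sketched as follows. This is a minimal illustration under stated assumptions: the dictionary `orders` stands in for the already persisted local data, `call_shipping_api` is a hypothetical callable representing the external system, and the status values are examples only.

```python
class ExternalServiceError(Exception):
    """Hypothetical error raised by the external system."""


def ship_order(orders, order_id, call_shipping_api):
    """Persist locally first, then call the external API and reconcile."""
    # Step 1: the local change is persisted first, flagged as not yet final.
    orders[order_id] = "Pending"
    try:
        # Step 2: the external call happens after the local commit.
        call_shipping_api(order_id)
    except ExternalServiceError:
        # Step 3a: compensating transaction, since a rollback is no longer possible.
        orders[order_id] = "Cancelled"
        return False
    # Step 3b: both systems agree, so the order can leave the Pending state.
    orders[order_id] = "Shipped"
    return True
```

The "Pending" status makes the uncertain period visible in the data, so that a failed or interrupted call never leaves a record that claims success.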

1.3. A Basic Explanation of Transactions

1.3.1. The Relevant Memory Components for Transactions

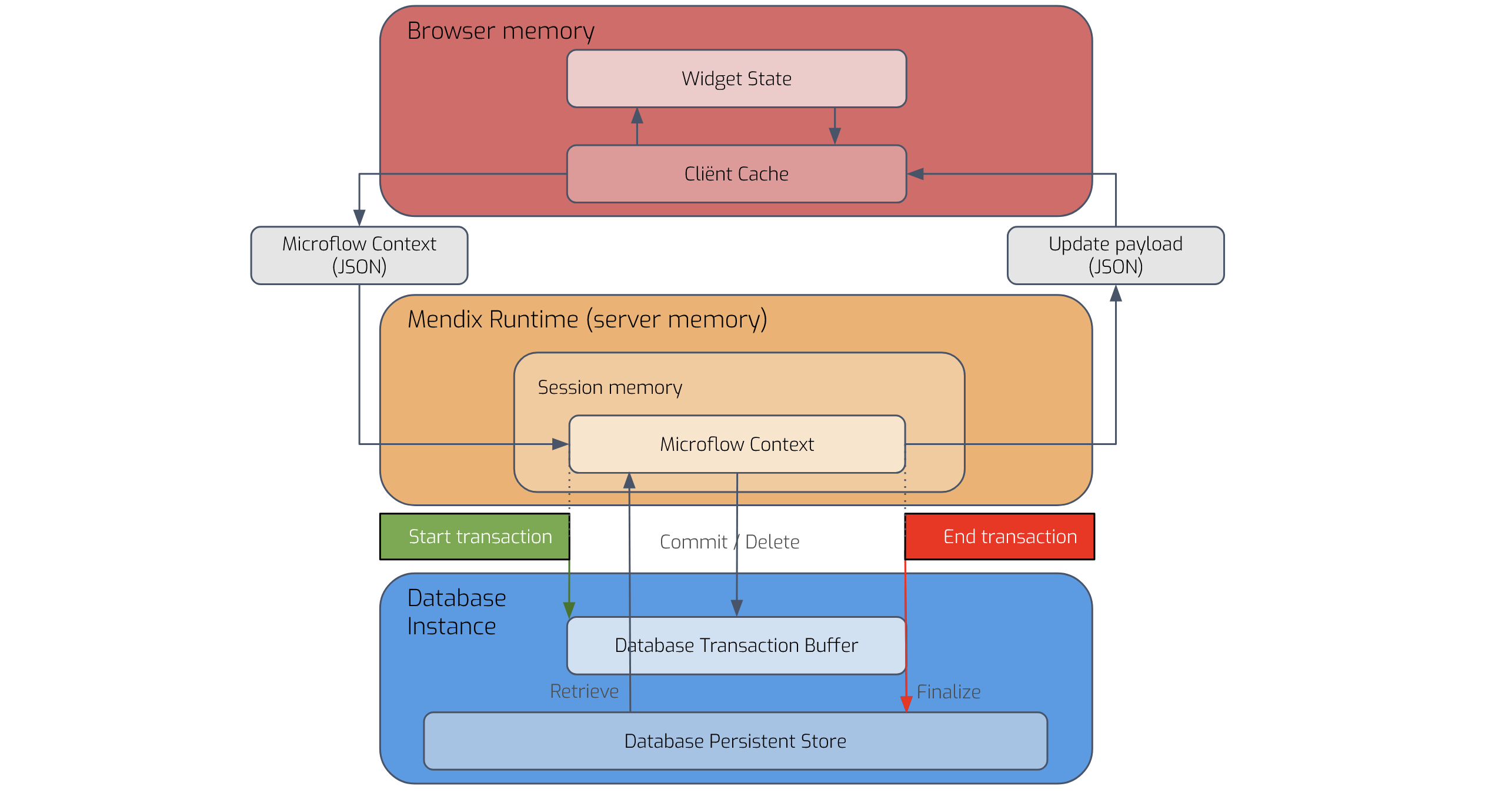

In order to understand transactions, it is necessary to know that a transaction mechanism uses four memory compartments in order to implement ACID principles:

- Client Cache: the memory component of the browser (client) where all user-made changes are stored. The Client Cache is updated with the contents of the Microflow Context depending on the actions taken by the server.

- Microflow Context: the memory on the Mendix Runtime Server that contains the object state. This memory is bound to the executing microflow hierarchy. Typically, this microflow hierarchy receives changes from a touchpoint (page, scheduled event, or published API). The Microflow Context contains the changes made to objects by microflow actions. The data in this compartment is private to the running microflow hierarchy, meaning that no other microflow hierarchy has access to it.

- Database Transaction Buffer: the SQL transaction opened by the Mendix Runtime. It can be seen as a memory component of the database that contains all temporary changes that the Microflow Context has written to the database by executing a ‘commit’ action.

The Database Transaction Buffer is private to the current Microflow Context; no other microflow hierarchy can access the Database Transaction Buffer of the current microflow hierarchy.

What can be confusing is that a Mendix ‘commit’ action is not the same as the SQL COMMIT command. A Mendix commit action actually translates to a SQL INSERT or UPDATE statement.

The SQL COMMIT command, on the other hand, permanently applies all changes in the Database Transaction Buffer. It is effectively equivalent to the successful end of the transaction: reaching the end event of the top-level microflow in a hierarchy if it was started from a page, or the successful completion of the scheduled event or API action.

To avoid confusion with Mendix terminology, this article uses the term ‘persist’ to refer to the permanent application of all changes, called Transaction Commit by Mendix.

- Database Persistent Store: the disk-based storage component of the database which contains all permanent changes that are written to the database. The data in this component is public, meaning that the data is accessible to other user sessions.
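The difference between writing to the Database Transaction Buffer and persisting can be observed in any SQL database. The sketch below uses SQLite through Python's `sqlite3` module purely as a stand-in; the `writer` and `reader` connections, the table, and the temporary file are assumptions for this illustration, and the databases supported by Mendix may differ in detail.

```python
import os
import sqlite3
import tempfile


def visibility_demo():
    """Return the row counts seen by (writer before, reader before, reader after)."""
    count = "SELECT COUNT(*) FROM orders"
    with tempfile.TemporaryDirectory() as folder:
        path = os.path.join(folder, "demo.db")
        writer = sqlite3.connect(path)  # plays the running microflow hierarchy
        reader = sqlite3.connect(path)  # plays another user session
        try:
            writer.execute(
                "CREATE TABLE orders (id INTEGER PRIMARY KEY, status TEXT)"
            )
            writer.commit()

            # Comparable to a Mendix commit action: an INSERT inside the
            # still-open SQL transaction.
            writer.execute("INSERT INTO orders (status) VALUES ('Open')")
            writer_before = writer.execute(count).fetchall()[0][0]
            reader_before = reader.execute(count).fetchall()[0][0]

            # Comparable to 'persist': the SQL COMMIT makes the row public.
            writer.commit()
            reader_after = reader.execute(count).fetchall()[0][0]
        finally:
            writer.close()
            reader.close()
    return writer_before, reader_before, reader_after
```

The writing connection sees its own uncommitted row, while the second connection only sees it after the SQL COMMIT. This mirrors the private Database Transaction Buffer and the public Database Persistent Store described above.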

1.3.2. The Transaction Lifecycle

The transaction lifecycle in Mendix has the following steps:

- Start: A transaction begins at the moment the top-level microflow of a hierarchy is called from a touchpoint (page, scheduled event, or published API). This results in the creation of a Microflow Context and a Database Transaction Buffer.

- Changing the Microflow Context: The called microflow modifies one or more objects by executing microflow actions such as Create and Change. These changes stay solely in the Microflow Context as long as a ‘Commit’ is not executed.

A ‘Delete’ microflow action behaves differently from a ‘Create’ or ‘Change’ action, because for an object that already exists in the database it cannot be confined to the Microflow Context. If a ‘Delete’ action is executed for an object that was created in the same transaction and never committed, the object is simply removed from the Microflow Context. If the object exists in the Database Persistent Store, a delete command is sent to the Database Transaction Buffer and the object can no longer be retrieved by the Microflow Context.

- Writing changes to the Database Transaction Buffer: A changed object is written to the Database Transaction Buffer at the moment a Mendix Commit is executed on an object within the Microflow Context. This means the data can now be retrieved with a ‘Retrieve from database’ action, provided this action is executed within the same active microflow hierarchy (Microflow Context).

- Undoing changes to the Microflow Context: Changes to an object in the Microflow Context can be undone by executing the ‘Rollback’ action. A Mendix Rollback action reverts the object to its state at the last commit point. Therefore, if the object was never committed during the current transaction, it reverts to its state in the Database Persistent Store. If it was committed once during the microflow execution and then changed again, it reverts to its state in the Database Transaction Buffer.

A Commit action cannot be reversed within the Microflow Context, and a Rollback action will not undo it. The only way to reverse a Commit action is to raise an unhandled exception, which undoes all changes made to the Database Transaction Buffer and the Microflow Context.

- End: The transaction ends when the top-level microflow of a hierarchy finishes normally (i.e., the end event of the top-level microflow is reached) or when an unhandled exception is thrown. However, the effect of a normal end and of a thrown exception on the object state is quite different:

- Normal end: All changes and flagged deletes in the Database Transaction Buffer are permanently and irreversibly written to the Database Persistent Store, making the changes visible to other user sessions. If a page initiated the transaction, the content of the Microflow Context is synchronized to the page. Finally, both the Database Transaction Buffer and the Microflow Context are destroyed.

- Unhandled exception: The Microflow Context and the Database Transaction Buffer are destroyed, meaning that all changes and flagged deletes in the Database Transaction Buffer are lost and no page synchronization occurs.
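The lifecycle steps above can be summarized in a small toy model. This sketch is an interpretation of the description in this section, not Mendix Runtime code; the `ToyTransaction` class and `run_microflow` function are hypothetical, objects are reduced to a single value per id, and delete handling and page synchronization are left out for brevity.

```python
class ToyTransaction:
    """Toy model of the server-side compartments; not the Mendix Runtime."""

    def __init__(self, persistent_store):
        self.store = persistent_store  # Database Persistent Store (public)
        self.buffer = {}               # Database Transaction Buffer (private)
        self.context = {}              # Microflow Context (private)

    def change(self, obj_id, value):
        """Create/Change: the new state lives only in the Microflow Context."""
        self.context[obj_id] = value

    def commit(self, obj_id):
        """Mendix commit action: write the object to the transaction buffer."""
        self.buffer[obj_id] = self.context[obj_id]

    def rollback(self, obj_id):
        """Mendix rollback action: revert to the state at the last commit point."""
        if obj_id in self.buffer:
            self.context[obj_id] = self.buffer[obj_id]
        elif obj_id in self.store:
            self.context[obj_id] = self.store[obj_id]
        else:
            self.context.pop(obj_id, None)  # new, never committed object

    def end_normally(self):
        """Persist: buffered changes become permanent and public."""
        self.store.update(self.buffer)
        self.buffer.clear()
        self.context.clear()

    def abort(self):
        """Unhandled exception: both private compartments are discarded."""
        self.buffer.clear()
        self.context.clear()


def run_microflow(persistent_store, logic):
    """Run `logic` as a top-level microflow; return True if it persisted."""
    tx = ToyTransaction(persistent_store)
    try:
        logic(tx)
    except Exception:
        tx.abort()
        return False
    tx.end_normally()
    return True
```

In this model, a rollback after a commit only restores the committed value, while an exception that escapes `logic` discards the committed value as well. That matches the distinction made above between the Rollback action and a full transaction rollback.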

1.4. Implementing Data Integrity with the Transaction Pattern

Data integrity is vital because it ensures information is accurate, consistent, and trustworthy.

Reliable data supports compliance, accountability, and confidence in systems and reports. This section addresses the order of data changes in order to support data integrity.

1.4.1. The ideal data-flow

To implement data integrity, the transaction lifecycle should be designed in the following order:

- Apply changes to objects.

- Validate all changed objects in the Microflow Context as one coherent collection.

- Persist all changes of the coherent collection if no validation fails, making them irreversibly permanent and public.

- Undo all object changes made by the Microflow Context to the coherent collection if any validation fails. This ensures that the objects are reverted to their original state. Remark: the objects must return to the state they were in before the transaction started, which is achieved by ensuring that no ‘Commit’ actions were performed on the coherent collection prior to the validation step.
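The order of these steps can be expressed as a small generic function. This is a sketch only: `run_unit_of_work` and its callback parameters `validate`, `persist`, and `undo` are hypothetical names, and how each callback would be realized in a microflow is left open here.

```python
def run_unit_of_work(changed_objects, validate, persist, undo):
    """Validate the whole collection first; persist only if nothing fails.

    `validate(obj)` returns a list of error messages for one object.
    `persist(obj)` and `undo(obj)` are called once per object.
    """
    errors = []
    for obj in changed_objects:
        errors.extend(validate(obj))

    if errors:
        # At least one rule failed: nothing is persisted, everything is undone.
        for obj in changed_objects:
            undo(obj)
        return errors

    # All rules passed: only now are the changes persisted.
    for obj in changed_objects:
        persist(obj)
    return errors
```

The key design choice is that no object is persisted before every object has been validated, so a failure never has to deal with partially committed data.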

1.4.2. Why Data Integrity is Difficult to Implement in Mendix

While Mendix best practice dictates performing all validations in memory before persisting changes to the database, implementing this at scale presents significant technical hurdles. This section evaluates the structural barriers in Mendix that impede transactional isolation and state consistency. Specifically, we examine how the platform lacks a native mechanism to manage intermediate object states.

The Intermediate Commit Trap

The Mendix Create, Change, and Delete actions allow for staging objects in the current database transaction by setting the 'Commit' option. While this triggers Domain Model-level checks, it does not inherently validate complex functional business rules. Because Mendix does not support "un-committing" specific objects within a transaction, any failure to meet business criteria after a commit has occurred forces a choice: either manually reversing the changes or triggering a full transaction rollback via an exception.

To ensure a seamless user experience, the Database Transaction Buffer should not be used as a workspace (scope) for intermediate changes. Instead, objects should remain uncommitted while being validated against business logic. This allows the application to provide validation feedback to the user without the risk of persisting inconsistent data or necessitating a disruptive system rollback.

The State Management Burden

Since the Database Transaction Buffer is not suitable for storing intermediate changes, all modifications must be held within the Microflow Context.

However, in a large microflow hierarchy where multiple objects are created and changed, developers must implement custom logic to track these changes as they move through the call stack, for both validation and commit purposes. While this is manageable for a simple microflow involving a single object, it becomes a significant burden in complex, nested microflow hierarchies. Mendix does not provide a native mechanism to "gather" all mutated objects across different levels of logic for a final, unified validation and commit action.

This is the origin of the scope concept in the Menditect Testability Framework: a way to collect all ‘dirty’ objects to ensure that they are validated as a single unit of work.
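The idea of collecting ‘dirty’ objects across a call hierarchy can be illustrated as follows. This sketch is not the implementation of the Menditect Testability Framework; the `Scope` class and the two example functions are hypothetical, and objects are modelled as plain dictionaries with an `id` key.

```python
class Scope:
    """Hypothetical tracker for objects changed anywhere in a call hierarchy."""

    def __init__(self):
        self._dirty = {}  # object id -> object, in order of first registration

    def register(self, obj):
        """Remember an object that was created or changed."""
        self._dirty[obj["id"]] = obj

    def dirty_objects(self):
        """Return every tracked object exactly once."""
        return list(self._dirty.values())


def add_order_line(scope, order, line):
    """Top-level logic: changes a line and delegates to nested logic."""
    order["lines"].append(line)
    scope.register(line)
    recalculate_total(scope, order)


def recalculate_total(scope, order):
    """Nested logic: changes the order and registers it in the same scope."""
    order["total"] = sum(line["amount"] for line in order["lines"])
    scope.register(order)
```

Because the same scope is passed down the call hierarchy, the top level can retrieve all changed objects at the end and validate them together, regardless of which level changed them.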

Furthermore, Mendix offers no capability with which all changed objects can be transferred to the Database Persistent Store in one step. Instead, after the validation step, developers must manually implement a ‘commit’ action for each changed object (refer to the CMT microflow pattern in the Orchestration typologies) to ‘save’ each validated object to the Database Transaction Buffer, which is labour-intensive and error-prone.

The State Undo Deficit

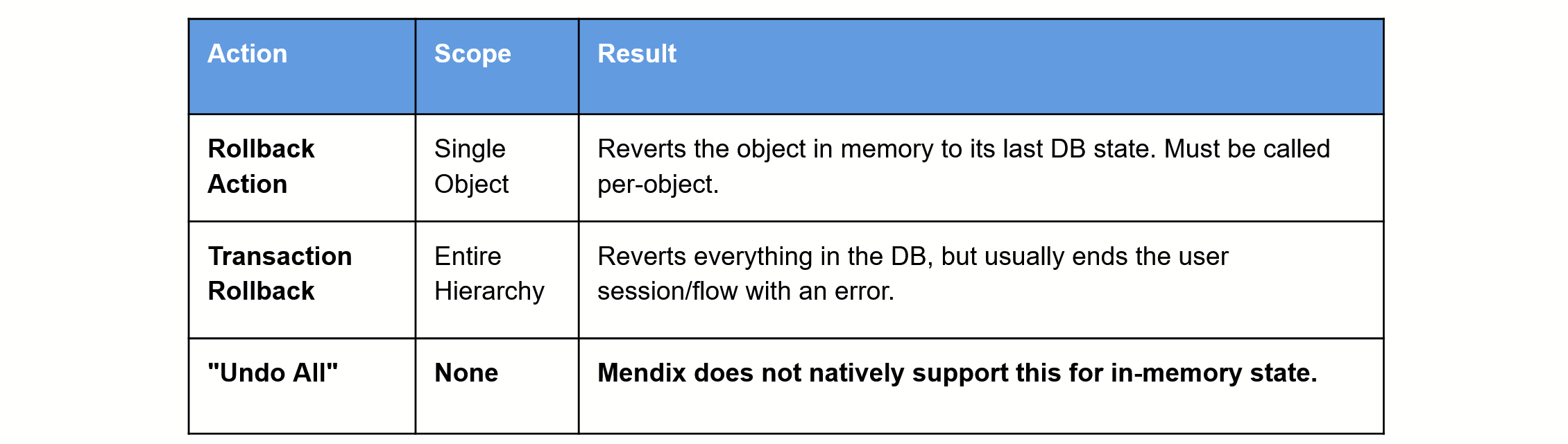

The ideal data-flow, as described in Section 1.4.1, implies that a validation failure should revert all object modifications and deletions within the microflow hierarchy to their initial state.

However, Mendix does not provide a ‘Global Undo’ or ‘State Reversion’ action capable of reverting the entire set of in-memory changes across a call stack to their initial state. While a Rollback action can revert an individual object to its last committed state in the database, it is fundamentally incapable of returning an object to the specific state it held at the moment the microflow hierarchy was initiated if that object was already in a modified state.

Consequently, there are only two paths to achieving this initial state:

- Throwing an Error: This induces a full Transaction Rollback, but at the cost of suppressing user-friendly validation feedback.

- Manual Reversion Logic: Implementing specific logic for each object in the collection to manually restore its previous values, which is necessary to maintain data consistency while still providing feedback to the user.
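The second path, manual reversion, amounts to remembering the state of each object at the start and copying it back on failure. The following sketch shows the principle with plain dictionaries; the `Snapshot` class is a hypothetical helper for this illustration, and a Mendix implementation would have to restore attribute values through microflow logic instead.

```python
import copy


class Snapshot:
    """Remember the state of a set of objects so it can be restored later."""

    def __init__(self, objects):
        self._objects = list(objects)
        # Deep copies, so later changes to nested values do not leak in.
        self._saved = [copy.deepcopy(obj) for obj in self._objects]

    def restore(self):
        """Put every object back into the state it had at snapshot time."""
        for obj, saved in zip(self._objects, self._saved):
            obj.clear()
            obj.update(copy.deepcopy(saved))
```

Unlike a rollback to the last committed state, this restores the state the objects had when the logic started, including any uncommitted changes the user had already made at that point.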

The Client-Contamination Problem

In the Mendix architecture, the Single Source of Truth (SSoT) for object state is dynamic, shifting between the browser (Client Cache) and the Runtime (Server) depending on the execution context. While a user interacts with a page, the Client Cache maintains the SSoT. However, when a microflow is triggered, the Runtime assumes authority over the object state. The "Client-Contamination Problem" emerges during the synchronization phase at the end of this request.

When an object is mutated (for example, when a total order amount is calculated after adding a new order line) during the execution of the microflow but a subsequent validation results in a failure, the application becomes susceptible to two primary negative outcomes:

- Synchronization of 'Dirty' State: If no explicit microflow rollback action is implemented, the Runtime pushes this inconsistent, "dirty" state to the Client Cache. This results in the UI displaying data that the server has changed but then rejected. In the orderline example, this results in an incorrect total order amount displayed in the browser.

- Destructive Rollbacks and 'Ghost' Objects: To mitigate contamination, developers may trigger an explicit rollback action (not to be confused with implementing a rollback error handler). While this reverts the object to its last committed state in the database, it is a destructive operation that wipes out all valid, uncommitted user inputs provided during the session. Furthermore, if the microflow receives a newly created object, a rollback removes the record from the Runtime. Because the subsequent synchronization to the Client fails to purge the client-side reference, it results in a 'ghost object': a record that persists in the UI but lacks a corresponding identity in the Runtime, rendering it impossible to commit or process further. In the orderline example: if a newly created line is rolled back, it remains visible in the browser's list or form, but any attempt to interact with it will trigger a system error.

Because the platform lacks a native transactional buffer to isolate the Client Cache from failed server-side side effects, developers are forced into "manual decontamination": laboriously resetting objects or refreshing views to re-establish a reliable state. Notably, setting the 'Refresh in client' option to false on microflow actions does not inhibit this behavior; the Runtime will still synchronize the final state of the Microflow Context to the Client Cache at the end of the execution cycle.

The Auto Committed Objects Issue

An auto commit occurs when the Runtime is forced to assign an ID to an object to maintain a relationship (e.g., showing a child object in a reference selector before the parent is saved). The mechanism is complex and can lead to issues when a session times out or crashes.

1.5. Conclusions: Architecting for Integrity

Understanding the theory of Mendix transactions is only the first step; the real challenge lies in navigating the pitfalls that emerge during implementation.

As this analysis shows, the disconnect between the Database Transaction Buffer and the Microflow Context makes relying on native platform behavior a risky strategy for maintaining data integrity. To build truly resilient enterprise applications, developers must look beyond the default "all-or-nothing" safety net and implement deliberate patterns, like those found in the Menditect Testability Framework, that isolate validation logic from the persistence cycle.

Only by acknowledging these architectural gaps can you move from merely building apps to engineering robust, integrity-first systems.